How many times do you hear the word “cloud” on a daily basis? What does it mean to you? Despite becoming a predominant buzzword, “cloud” means different things to different people, which leads to confusion in many conversations. In talking with customers, it has become clear that many people view cloud computing as something that happens solely off-site, residing entirely at an external hosting provider. That assumption deserves correcting: cloud computing describes a style of architecture and operations, not a location. The operational model is centered around consumption-based, on-demand resources, automated workloads, and self-service provisioning – all of which can live entirely off-premises (public cloud), entirely on-premises (private cloud), or a combination thereof (hybrid cloud). Avi Networks brings these concepts to the load balancing space, easing the transition to cloud-like environments.

There are many reasons to go with any of the above approaches, but hybrid cloud is becoming an increasingly common deployment model, in which companies use both internal cloud environments and public cloud environments (Amazon AWS, Microsoft Azure, Google Cloud, etc.). Advantages of this approach include flexibility, data privacy, and cost. However, managing such an environment can become cumbersome, because each of the disparate environments comes with its own tooling. Avi Vantage is designed to ease many of the administrative difficulties inherent to hybrid cloud, and it also provides automation and integration capabilities that enable things like automatic scaling and cloudbursting.

For those unfamiliar, cloudbursting is a concept where an application is primarily delivered via an on-prem environment, hosted in a customer’s datacenter. When the requirements for that application spike, rather than housing idle capacity in the datacenter, additional resources are spun up in a public cloud environment to meet the temporary demand. When that spike of demand ends, the public cloud resources are spun back down, returning all application delivery responsibility back to the on-prem environment. This allows for the absorption of large, temporary spikes in traffic without having to pay for mostly idle resources in your datacenter.

How does Avi help with hybrid cloud and cloudbursting? Via multicloud support and application AutoScale.

Multicloud Support

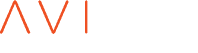

Avi Vantage has native support for multiple cloud environments from the same controller cluster, allowing for a true single pane of glass view into your load balancing environment, regardless of where it lives. Say you have an on-prem VMware environment, an on-prem Mesos/Marathon environment, and an off-prem Amazon AWS environment. A single controller cluster can deploy and manage load balancers in all of those environments, offering the same feature set, ease of automation, and visibility across them.

Why is this important? This approach greatly eases administrative difficulty in a hybrid cloud design. You get a single pane of glass and a standard toolset for deploying applications across all your environments, which allows for consistent processes across disparate ecosystems.

Application AutoScale

Avi Vantage gathers over 700 data points per second, continuously analyzing application performance. This information can be used to determine when back-end application resources are struggling to meet demand, and trigger scaling decisions. These scaling decisions can include scaling out resources on-prem, or spinning up resources off-prem and utilizing those resources to absorb the increased demand (cloudbursting). Some examples of when you might cloudburst:

- When the average CPU across all servers in a pool exceeds 75%

- When SSL TPS reaches 80% of defined capacity

- When application throughput reaches 80% of maximum capacity
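To make the three example conditions concrete, here is a minimal sketch in Python. It is not Avi configuration or Avi's API: the `PoolSnapshot` class, its field names, and the `burst_reasons` function are hypothetical and exist only to illustrate how such thresholds could be evaluated.

```python
from dataclasses import dataclass


@dataclass
class PoolSnapshot:
    """Hypothetical point-in-time view of a pool (not an Avi data structure)."""
    server_cpu_percent: list
    ssl_tps: float
    ssl_tps_capacity: float
    throughput_mbps: float
    throughput_capacity_mbps: float


def burst_reasons(snap):
    """Return the names of the example conditions that currently hold."""
    reasons = []
    cpus = snap.server_cpu_percent
    # Average CPU across all servers in the pool exceeds 75%.
    if cpus and sum(cpus) / len(cpus) > 75:
        reasons.append("avg_cpu_above_75")
    # SSL TPS reaches 80% of defined capacity.
    if snap.ssl_tps >= 0.8 * snap.ssl_tps_capacity:
        reasons.append("ssl_tps_at_80_percent")
    # Application throughput reaches 80% of maximum capacity.
    if snap.throughput_mbps >= 0.8 * snap.throughput_capacity_mbps:
        reasons.append("throughput_at_80_percent")
    return reasons


snapshot = PoolSnapshot([80.0, 90.0], ssl_tps=100, ssl_tps_capacity=1000,
                        throughput_mbps=850, throughput_capacity_mbps=1000)
print(burst_reasons(snapshot))
```

Returning the list of matching conditions, rather than a single boolean, makes it easy to log why a cloudburst was requested.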

These are a tiny subset of the types of metrics you can get with Avi, and every metric can be used to trigger autoscale. A full list of the available metrics and events is below.

Metric-based alerts: http://kb.avinetworks.com/metrics-based-alerts/

Event-based alerts: http://kb.avinetworks.com/event-based-alerts/

Predictive AutoScale

Avi Vantage can also use performance-based metrics to anticipate when load will exceed capacity, and scale out proactively to meet the expected demand – this is known as predictive autoscale. Predictive autoscale is based on learned application trends, with application response time as an indicator of capacity. Over time, Avi baselines the performance of your application servers under normal conditions and learns how they perform under typical load. As load increases, the system can detect when an application server starts to respond more slowly, and learn its theoretical capacity from the many metrics available (throughput, connections per second, SSL TPS, etc.). Additionally, Avi can learn capacity trends over time and use that information to preemptively meet anticipated demand based on recurring, regular events.
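The idea of using response time as a capacity indicator can be illustrated with a small sketch. This is not Avi's algorithm, which is not described in this post. The function names, the `slowdown_factor` of 1.5, and the `headroom` of 0.8 are all assumptions chosen for illustration.

```python
from statistics import mean


def estimate_capacity(baseline_ms, samples, slowdown_factor=1.5):
    """Highest observed load whose response time stayed near the baseline.

    baseline_ms: response times (ms) recorded under normal load.
    samples: (requests_per_second, response_ms) pairs seen as load grows.
    slowdown_factor: assumed tolerance; 1.5 means up to 50% slower is fine.
    """
    limit = mean(baseline_ms) * slowdown_factor
    healthy_loads = [load for load, ms in samples if ms <= limit]
    return max(healthy_loads) if healthy_loads else None


def should_scale_out_early(forecast_load, capacity, headroom=0.8):
    """Scale out before the forecast load reaches the learned capacity."""
    return capacity is not None and forecast_load >= headroom * capacity


capacity = estimate_capacity([20, 20, 21, 19], [(200, 22), (400, 28), (600, 45)])
print(capacity, should_scale_out_early(350, capacity))
```

In this toy model the forecast load would come from a learned trend, such as a recurring daily peak. Here it is simply passed in as a number.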

Production-ready Hybrid Cloud Deployment with Avi and AWS

So, what would this actually look like in a production deployment? Let’s create a scenario to illustrate a very common use case I see with my customers – a hybrid cloud consisting of an on-prem VMware environment and a public Amazon AWS environment, where customers need premium elastic load balancing for AWS deployments. Applications are primarily delivered from the VMware environment, with AWS used for cloudbursting during peak times of the day.

- Primary, on-prem: VMware ESXi 6

- Secondary, off-prem: Amazon AWS

- Bursting options:

  - Spin up new servers in AWS, and add to existing pool

  - Spin up new SEs and servers in AWS, modify DNS to utilize both locations

Option 1: Spin up new servers in AWS, and add to existing pool

To keep this post simple, let’s focus on Option 1 and see what it would actually look like in terms of configuration.

Using predictive autoscale, or Avi’s built-in event- and metrics-based triggers, Vantage can determine when existing resources are no longer sufficient to handle the load on the application. In this case, let’s build our cloudbursting around true server health, based on Avi’s Health Score.

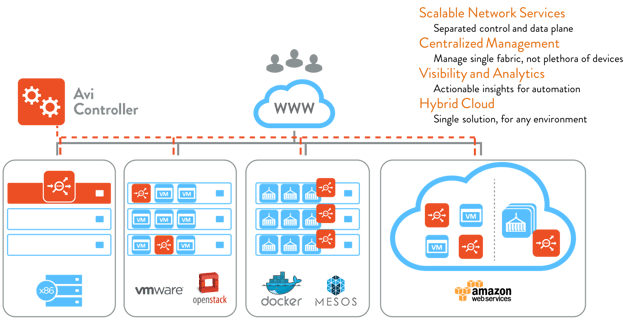

We can create a metrics-based trigger that would look something like this:

When the average health of all servers in a pool goes below 50 over a 300-second sample, this alert triggers a sequence of events that scales out the application resources, starting with the AutoScale template.

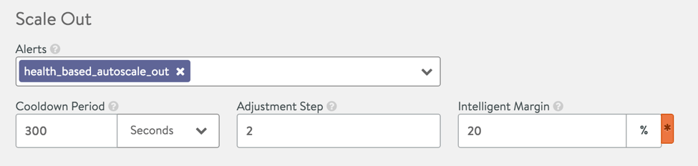
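The trigger logic (average pool health below 50 over a 300-second sample) can be sketched as a sliding window. This is an illustrative model only, not how Avi evaluates alerts internally. The `HealthWindow` class and its method names are hypothetical, and for simplicity the sketch does not wait for a full 300 seconds of data before firing.

```python
from collections import deque


class HealthWindow:
    """Sliding window over the pool's average health score."""

    def __init__(self, window_seconds=300, threshold=50):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._samples = deque()  # (timestamp, average health of the pool)

    def add(self, timestamp, server_scores):
        """Record one sample; server_scores must be a non-empty list."""
        pool_avg = sum(server_scores) / len(server_scores)
        self._samples.append((timestamp, pool_avg))
        # Drop samples that have fallen out of the window.
        cutoff = timestamp - self.window_seconds
        while self._samples and self._samples[0][0] <= cutoff:
            self._samples.popleft()

    def should_scale_out(self):
        """True when the windowed average health is below the threshold."""
        if not self._samples:
            return False
        window_avg = sum(score for _, score in self._samples) / len(self._samples)
        return window_avg < self.threshold


window = HealthWindow()
window.add(0, [90, 80])
window.add(100, [40, 40])
print(window.should_scale_out())
window.add(310, [30, 30])
print(window.should_scale_out())
```

Averaging over the window, rather than reacting to a single sample, keeps a brief dip in health from triggering a scaleout.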

In the AutoScale template, we can configure an AutoScale alert, and define parameters to control the action:

You can see there is a 300-second cooldown period between scaleouts, which prevents the system from over-scaling the environment. Additionally, we can define how many servers we want to add per scaleout – in this case, we’ll add two servers at a time (the min/max number of servers can be controlled elsewhere in this template).

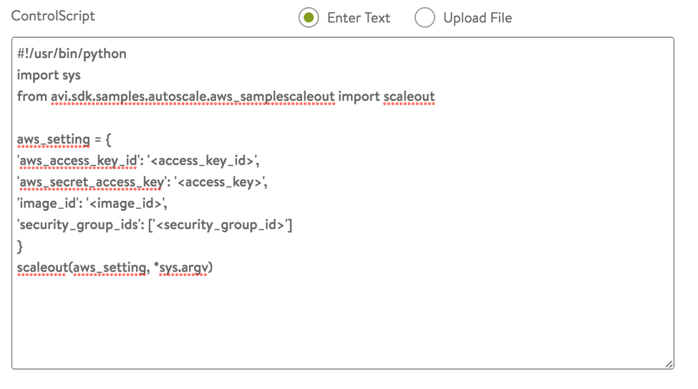
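How the cooldown, the step size, and the maximum server count interact can be shown with a short sketch. The `ScaleOutPolicy` class is hypothetical and is not the AutoScale template itself. The `max_servers` default of 10 is an assumed value, since the actual limit is set elsewhere in the template.

```python
class ScaleOutPolicy:
    """Toy model of a scaleout policy with a cooldown and a step size."""

    def __init__(self, cooldown_seconds=300, step=2, max_servers=10):
        self.cooldown_seconds = cooldown_seconds
        self.step = step
        self.max_servers = max_servers  # assumed limit, for illustration
        self._last_scaleout = None

    def servers_to_add(self, now, current_servers):
        """Return how many servers to add when the trigger fires at time now."""
        in_cooldown = (
            self._last_scaleout is not None
            and now - self._last_scaleout < self.cooldown_seconds
        )
        if in_cooldown:
            return 0
        # Never grow past the configured maximum.
        to_add = min(self.step, self.max_servers - current_servers)
        if to_add <= 0:
            return 0
        self._last_scaleout = now
        return to_add


policy = ScaleOutPolicy(max_servers=5)
print(policy.servers_to_add(now=0, current_servers=2))
print(policy.servers_to_add(now=100, current_servers=4))
print(policy.servers_to_add(now=300, current_servers=4))
```

The cooldown gives newly added servers time to come online and affect the health score before another scaleout is considered.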

The AutoScale template will trigger a Control Script, which contains the specifics of the scaling: instantiating the application images in AWS and adding them to the existing pool once they are online. There is a script under the hood making the API calls to AWS, which is beyond the scope of this blog, but the config in the UI is quite simple:

Specific details on the underlying script requirements can be found via Avi’s GitHub repository: https://github.com/avinetworks/sdk.
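The overall shape of such a script can be sketched without committing to any particular API. The example below is not the script from the repository above. `burst_into_pool` and its three callables are hypothetical stand-ins for the real AWS and Avi API calls, and the fake implementations exist only so the sketch runs on its own.

```python
def burst_into_pool(count, launch_instance, wait_until_online, add_to_pool):
    """Launch servers and add each one to the pool once it is online.

    launch_instance: callable returning the new server's IP (stand-in for AWS).
    wait_until_online: callable(ip) -> bool, True once the server is ready.
    add_to_pool: callable(ip) that adds the server to the existing pool.
    """
    added = []
    for _ in range(count):
        ip = launch_instance()
        # Only servers that actually came online are added to the pool.
        if wait_until_online(ip):
            add_to_pool(ip)
            added.append(ip)
    return added


# Fake backends so the sketch runs without any cloud account.
fake_ips = iter(["10.0.0.11", "10.0.0.12"])
pool = ["192.168.1.10"]
added = burst_into_pool(
    count=2,
    launch_instance=lambda: next(fake_ips),
    wait_until_online=lambda ip: True,
    add_to_pool=pool.append,
)
print(added, pool)
```

Passing the cloud and load balancer operations in as callables keeps the ordering logic testable on its own. A real script would replace them with calls to the AWS and Avi APIs.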

At this point, your autoscale is configured and ready to go. You can set up a similar configuration to scale your application resources back in once the average health score rises above, say, 75. This configuration allows dynamic cloudbursting, using public cloud resources only when necessary.

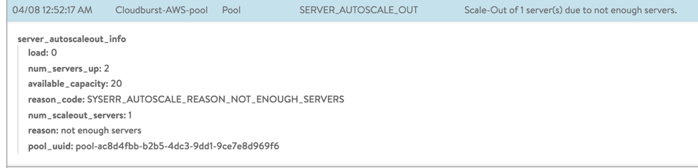
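Using separate thresholds for scaling out (below 50) and scaling in (above 75) leaves a gap in which nothing happens, which keeps the system from flapping between the two actions. A minimal sketch of that decision, with a hypothetical function name and return values:

```python
def autoscale_action(avg_health, burst_servers,
                     scale_out_below=50, scale_in_above=75):
    """Decide what to do for the current average pool health score.

    burst_servers: number of public cloud servers currently in the pool.
    """
    if avg_health < scale_out_below:
        return "scale_out"
    # Only scale in if there is burst capacity to remove.
    if avg_health > scale_in_above and burst_servers > 0:
        return "scale_in"
    return "hold"


for health, burst in [(40, 0), (60, 2), (80, 2), (80, 0)]:
    print(health, burst, autoscale_action(health, burst))
```

A health score between the two thresholds results in no action, so the burst servers stay in place until the application has clearly recovered.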

You’ll also get events reported anytime a scaleout/scalein action takes place, similar to the below:

These events can also be utilized to trigger internal alerting mechanisms within your organization if desired.

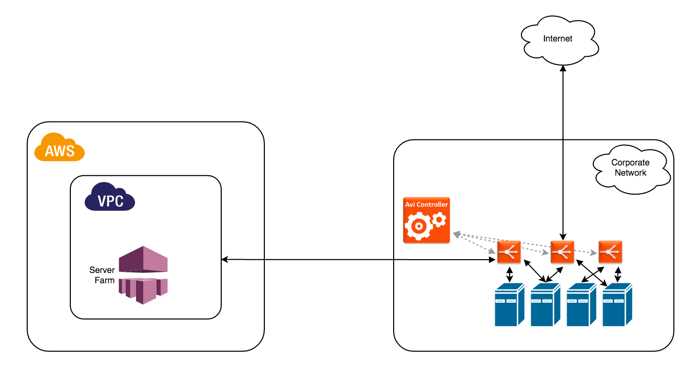

The end result of this scaleout action would look something like this:

Option 2: Spin up SEs and servers in AWS

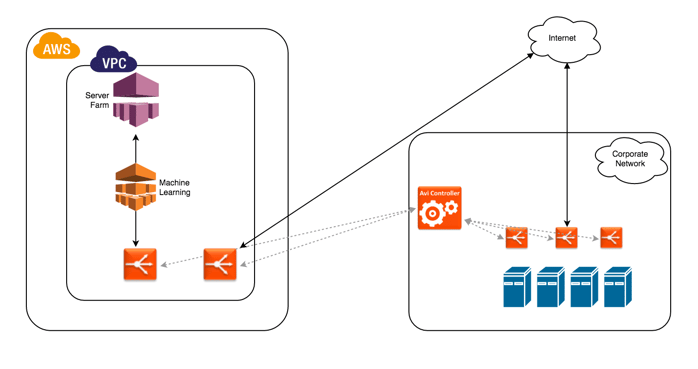

You may find yourself seeking a more comprehensive cloudbursting approach, where both SEs and application servers are spun up in your AWS environment. While I won’t get into the specifics here, the configuration would be very similar to Option 1 above. When your predefined autoscale trigger is hit, the below actions would occur:

- API calls would be made to the on-prem Avi Controller to instantiate SEs in AWS and place the VS config onto them

- API calls would be made to AWS to create the application server instances

- Newly created application instances would be added to the pool on the new SEs

- API calls would be made to your DNS or GSLB environment to add the new VS IP to the appropriate configuration
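The four actions above are order-dependent: DNS or GSLB should only point at the new location once the SEs, servers, and pool are in place. A minimal sketch of that sequencing follows. The `run_burst_steps` helper and the step names are hypothetical, and the lambdas stand in for the real API calls.

```python
def run_burst_steps(steps):
    """Run (name, action) steps in order and stop at the first failure.

    Each action is a callable returning True on success.
    Returns the names of the steps that completed.
    """
    completed = []
    for name, action in steps:
        if not action():
            break
        completed.append(name)
    return completed


# Stand-ins for the real API calls; each returns True to signal success.
steps = [
    ("create_ses_and_place_vs", lambda: True),
    ("create_app_servers", lambda: True),
    ("add_servers_to_pool", lambda: True),
    ("update_dns_or_gslb", lambda: True),
]
print(run_burst_steps(steps))
```

Because the DNS or GSLB update is the last step, a failure earlier in the sequence leaves traffic flowing only to the on-prem environment.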

At this point, you would have application resources running in both your on-prem VMware environment and your off-prem AWS environment, with each location taking traffic directly. That would look something like this:

Conclusion

Hybrid cloud is commonly thought of as the future of IT infrastructure, but you need to have the proper tools and software-defined infrastructure to get there. Avi Networks can help take your organization to this next level by utilizing our built-in AutoScale functionality, combined with our metrics and automation capabilities. Even if you're not using a hybrid cloud today, deploying Avi into your existing environment(s) will enable a natural extension into additional environments as needed.